Spark is often cited as running up to 100 times faster than MapReduce for in-memory workloads, because it can cache data in memory, whereas MapReduce reads from and writes to disk at each step. It was developed to overcome the limitations of Hadoop's MapReduce paradigm. It is a general-purpose cluster computing system that provides high-level APIs in Scala, Python, Java, and R.

INSTALL APACHE SPARK ON WINDOWS 7 SOFTWARE

Spark is an open-source framework for running analytics applications. It is a data processing engine, hosted at the vendor-independent Apache Software Foundation, for working on large data sets (big data).

Are you able to help? Thanks.

    16/02/24 12:06:02 WARN General: Plugin (Bundle) "" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/C:/IT_CodeRepo/BigData/spark/lib/datanucleus-rdbms-3.2.9.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/C:/IT_CodeRepo/BigData/spark/bin/./lib/datanucleus-rdbms-3.2.9.jar."
    16/02/24 12:06:02 WARN General: Plugin (Bundle) "" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/C:/IT_CodeRepo/BigData/spark/lib/datanucleus-api-jdo-3.2.6.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/C:/IT_CodeRepo/BigData/spark/bin/./lib/datanucleus-api-jdo-3.2.6.jar."
    16/02/24 12:06:02 WARN General: Plugin (Bundle) "org.datanucleus" is already registered. Ensure you dont have multiple JAR versions of the same plugin in the classpath. The URL "file:/C:/IT_CodeRepo/BigData/spark/bin/./lib/datanucleus-core-3.2.10.jar" is already registered, and you are trying to register an identical plugin located at URL "file:/C:/IT_CodeRepo/BigData/spark/lib/datanucleus-core-3.2.10.jar."
    16/02/24 12:06:02 WARN Connection: BoneCP specified but not present in CLASSPATH (or one of dependencies)
    16/02/24 12:06:06 WARN ObjectStore: Version information not found in metastore. [...]t enabled so recording the schema version 1.2.0
    16/02/24 12:06:06 WARN ObjectStore: Failed to get database default, returning NoSuchObjectException
    16/02/24 12:06:07 WARN : Your hostname, TORL2413 resolves to a loopback/non-reachable address: fe80:0:0:0:45b5:a994:a3fa:1fc6%eth9, but we couldn't find any external IP address!
    at .ql.(SessionState.java:522)
    at .(ClientWrapper.scala:194)
    at .(IsolatedClientLoader.

INSTALL APACHE SPARK ON WINDOWS 7 64 BIT

Sangeet, I'm running Spark 1.6.0 (pre-built for Hadoop 2.6 and later) standalone, without Hadoop, on Windows 7 64-bit and get the error in the log above.

Next time, just type myspark on the command line to open pyspark with the CSV package. Explore other related data sets at this link.

Download larger Movie/Ratings data sets to slice and dice the data in different ways, and evaluate the performance (memory/CPU) implications when the data is cached vs. not cached.
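One way to make the cached vs. not cached comparison concrete is to time the same action before and after caching. The sketch below is only an illustration: the helper names `time_action` and `compare_cached_vs_uncached` are made up for this example, and the function assumes it is handed a Spark DataFrame (or any object offering `count()`, `cache()` and `unpersist()`). Because caching in Spark is lazy, the first `count()` after `cache()` is treated as a warm-up and only the following one is measured.

```python
from timeit import default_timer


def time_action(action):
    """Run a zero-argument callable and return (result, elapsed_seconds)."""
    start = default_timer()
    result = action()
    return result, default_timer() - start


def compare_cached_vs_uncached(df):
    """Time df.count() without caching, then again once the data is cached.

    Assumption: `df` behaves like a Spark DataFrame, i.e. it provides
    count(), cache() and unpersist().
    """
    rows, uncached_s = time_action(df.count)

    df.cache()                  # only marks the data for caching (lazy)
    time_action(df.count)       # warm-up: this action actually fills the cache
    _, cached_s = time_action(df.count)   # measured run, served from the cache

    df.unpersist()              # release the cached data again
    return {"rows": rows, "uncached_s": uncached_s, "cached_s": cached_s}
```

In the pyspark shell this could be called as `compare_cached_vs_uncached(ratings)`. Timings from a single run are noisy, so repeating the call a few times gives a fairer picture.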

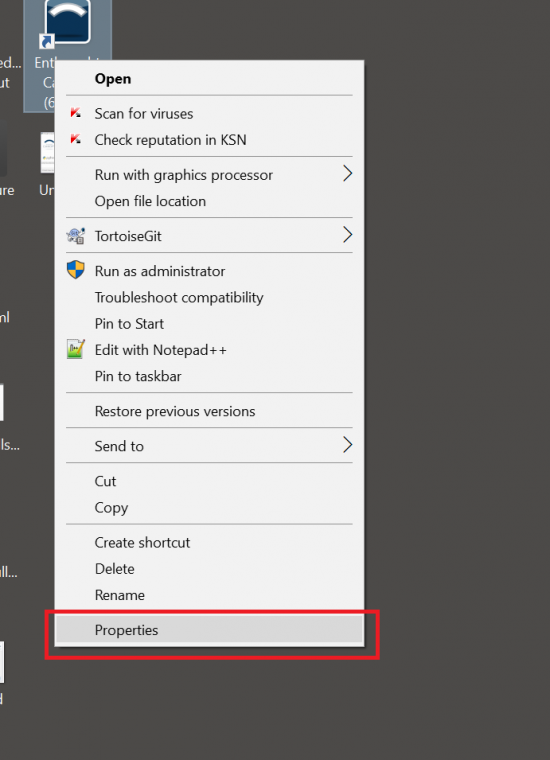

ratings.cache() puts the data in memory; ratings.unpersist() removes it from the cache.

Create C:\BigData\Spark\bin\myspark.bat with pyspark --packages com.databricks:spark-csv_2.11:1.3.0 in it.

... and hit the Tab key to list all commands and options.

If the \tmp\hive permissions are not set properly, you may receive an error like this: "The root scratch dir: /tmp/hive on HDFS should be writable."
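Before digging further into a scratch-directory error like this, it can help to confirm the basics from plain Python. The following is a rough sketch, not an official diagnostic: `check_spark_windows_setup` is a hypothetical helper, and it assumes that winutils.exe lives in the `bin` folder under HADOOP_HOME and that the scratch directory is `\tmp\hive` on the current drive. The writability test uses `os.access`, which on Windows is only a coarse check and does not replace looking at the permissions with winutils.exe itself.

```python
import os


def check_spark_windows_setup(env=None, scratch_dir=r"\tmp\hive"):
    """Return a list of problems found; an empty list means none were found.

    Assumptions (not taken from Spark itself): winutils.exe is expected in
    %HADOOP_HOME%\\bin, and scratch_dir is the Hive scratch directory.
    """
    env = os.environ if env is None else env
    problems = []

    hadoop_home = env.get("HADOOP_HOME")
    if not hadoop_home:
        problems.append("HADOOP_HOME is not set")
    else:
        winutils = os.path.join(hadoop_home, "bin", "winutils.exe")
        if not os.path.isfile(winutils):
            problems.append("winutils.exe not found at " + winutils)

    if not os.path.isdir(scratch_dir):
        problems.append("scratch directory missing: " + scratch_dir)
    elif not os.access(scratch_dir, os.W_OK):
        # Coarse check only; it does not inspect the full Windows ACLs.
        problems.append("scratch directory not writable: " + scratch_dir)

    return problems
```

Calling `check_spark_windows_setup()` with no arguments reads the real environment; an empty list only means that none of these particular checks failed.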

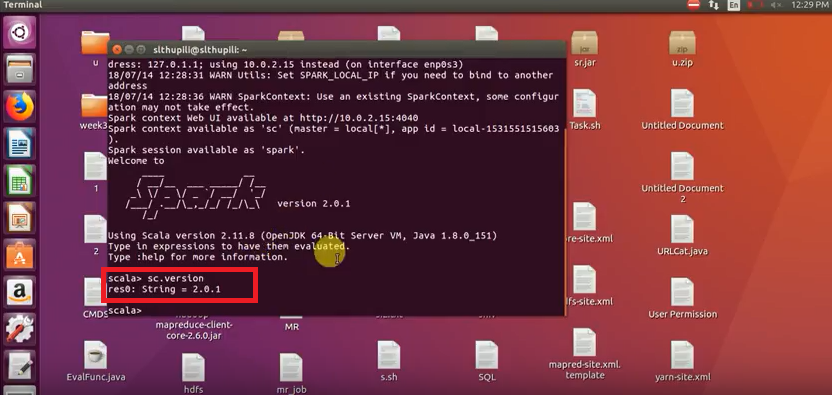

TopMovieNames=sqlContext.sql("Select movies.c1 as MovieName, count(*) as Cnt from ratings, movies where ratings.c1=movies.c0 group by movies.c1 Order by Cnt desc")

Some more troubleshooting commands/tips:

- sqlContext._get_hive_ctx(): if this runs clean with no errors, then the winutils.exe version, HADOOP_HOME path, etc. are correct.
- sc.appName="myFirstApp": the appName appears in Jobs, which makes the application easy to tell apart.
- winutils.exe ls \tmp\hive: run from the Windows Command Prompt, this displays the access level of the \tmp\hive folder.
- If you see any errors, you probably do not have proper access to the C:\ drive (especially on a work laptop that restricts access to C:\). In that case, try running the winutils and pyspark commands from a D:\ prompt.
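The logic of the TopMovieNames query can be sanity-checked without a working Spark installation. The sketch below swaps in Python's built-in sqlite3 module instead of Spark SQL, purely to show what the join and group-by return. The rows are made up for illustration, and the column layout is an assumption based on the query: movies.c0 is taken to be the movie id, movies.c1 the title, and ratings.c1 the movie id being rated.

```python
import sqlite3

# Made-up sample rows, for illustration only.
movies = [(1, "Movie A"), (2, "Movie B"), (3, "Movie C")]   # (c0 = id, c1 = title)
ratings = [(10, 1, 5), (11, 1, 4), (12, 2, 3),
           (13, 1, 2), (14, 2, 5)]                          # (c0 = user, c1 = movie id, c2 = rating)

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE movies (c0 INTEGER, c1 TEXT)")
conn.execute("CREATE TABLE ratings (c0 INTEGER, c1 INTEGER, c2 INTEGER)")
conn.executemany("INSERT INTO movies VALUES (?, ?)", movies)
conn.executemany("INSERT INTO ratings VALUES (?, ?, ?)", ratings)

# Same SQL text as in the tutorial, run against SQLite instead of Spark.
query = ("Select movies.c1 as MovieName, count(*) as Cnt "
         "from ratings, movies where ratings.c1=movies.c0 "
         "group by movies.c1 Order by Cnt desc")
top_movie_names = conn.execute(query).fetchall()

print(top_movie_names)   # [('Movie A', 3), ('Movie B', 2)]
```

"Movie C" does not appear in the result because it has no ratings and the query is an inner join. In Spark, the same statement returns a DataFrame rather than a list of tuples.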